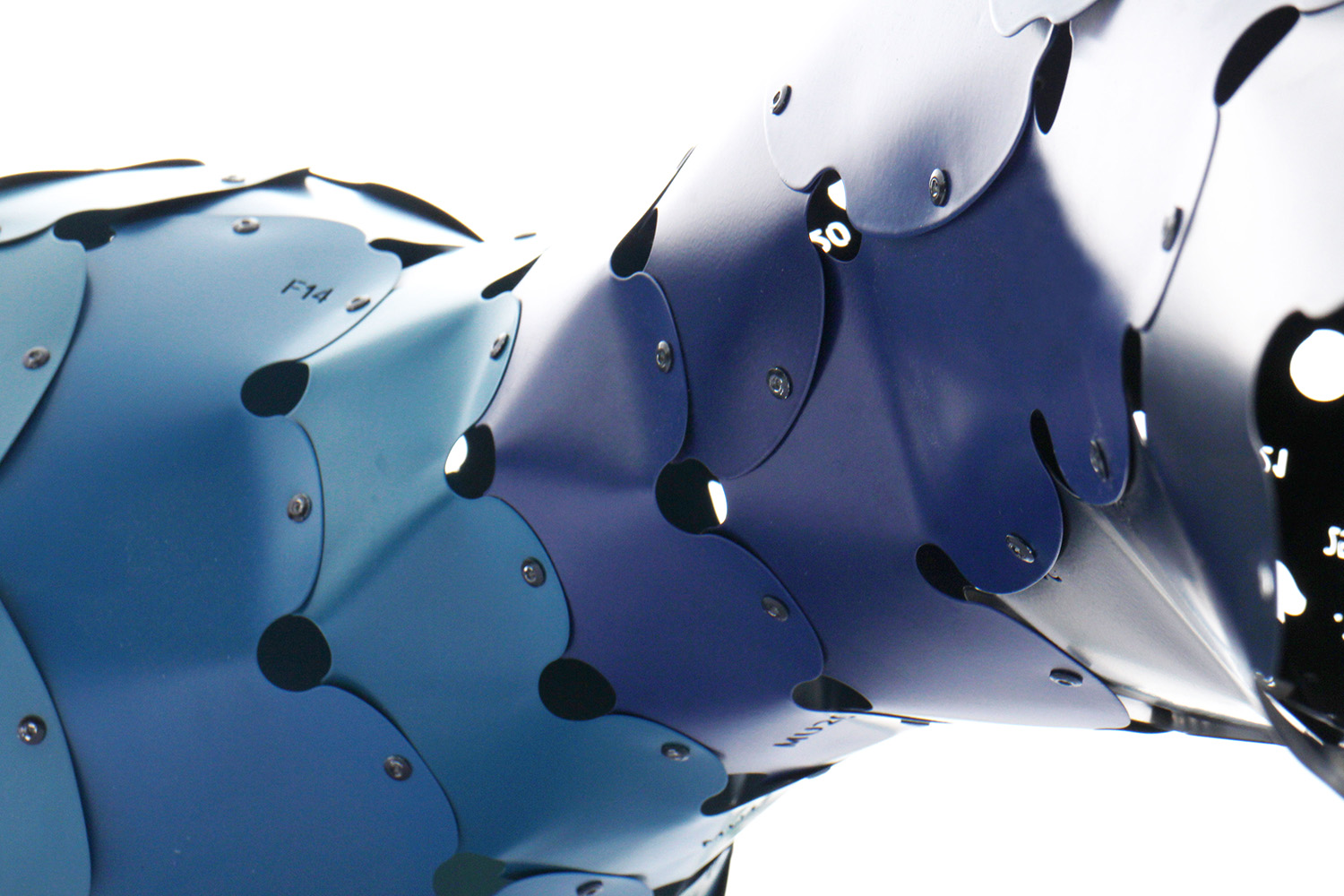

A few months ago, OpenAI released Point-E, a point cloud-based text-to-3D model generator. Shap-E improves on the earlier Point-E with more realistic textures and lighting.

Shap-E generates 3D models in two stages. First, an encoder transforms 3D objects into mathematical representations known as implicit functions. Then a diffusion model is trained on these representations to generate new 3D models. The resulting models have more realistic textures and lighting, and they are produced faster than those generated by Point-E.
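
To make the idea of an implicit function concrete, here is a minimal sketch in Python. It is not Shap-E's code: Shap-E learns its implicit functions, while this example uses a hand-written signed distance function for a sphere, and the names `sphere_sdf` and `inside_grid_points` are invented for illustration. The point is only that the shape is defined by a function you can query at any 3D coordinate, rather than by a stored list of points or triangles.

```python
import math


def sphere_sdf(point, center=(0.0, 0.0, 0.0), radius=1.0):
    """Signed distance from `point` to the sphere's surface.

    Negative inside the sphere, zero on the surface, positive outside.
    The shape is never stored explicitly; it is implied by this function.
    """
    return math.dist(point, center) - radius


def inside_grid_points(sdf, resolution=5, extent=1.5):
    """Query the implicit function on a regular grid and keep interior points.

    Because the function is defined everywhere, the grid can be made as
    fine as needed, which is what makes the representation resolution-free.
    """
    step = 2 * extent / (resolution - 1)
    coords = [-extent + i * step for i in range(resolution)]
    return [
        (x, y, z)
        for x in coords
        for y in coords
        for z in coords
        if sdf((x, y, z)) < 0.0
    ]


if __name__ == "__main__":
    print(sphere_sdf((0.0, 0.0, 0.0)))  # center of the sphere: inside
    print(sphere_sdf((2.0, 0.0, 0.0)))  # one unit beyond the surface: outside
    print(len(inside_grid_points(sphere_sdf)))
```

A learned implicit function plays the same role as `sphere_sdf` here, except that its behavior comes from trained parameters instead of a formula.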

OpenAI describes Shap-E’s results as “comparable or better” than Point-E’s. The code is available on Shap-E’s GitHub page.

Point-E takes a different approach: it uses diffusion models to generate 3D point clouds, which are then converted into meshes. A point cloud is a set of discrete points in 3D space that together outline an object’s shape without providing explicit information about its surface or structure. Although Point-E’s results are impressive as a technology demonstration, they are fairly crude and low in detail.
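
The difference between a point cloud and a mesh can be sketched in a few lines of Python. This is an illustration only and has nothing to do with Point-E's actual pipeline; the function `sample_sphere_point_cloud` and the tetrahedron data are made up for the example. The point cloud is just a list of coordinates, while the mesh also records which vertices connect to form surface triangles.

```python
import math
import random


def sample_sphere_point_cloud(num_points, radius=1.0, seed=0):
    """Return `num_points` random points lying on a sphere's surface.

    The result is a bare list of (x, y, z) tuples: nothing in it says
    which points are neighbors or where the surface runs between them.
    """
    rng = random.Random(seed)
    cloud = []
    for _ in range(num_points):
        # Normalizing a Gaussian vector yields a uniformly random direction.
        x, y, z = (rng.gauss(0.0, 1.0) for _ in range(3))
        norm = math.sqrt(x * x + y * y + z * z)
        cloud.append((radius * x / norm, radius * y / norm, radius * z / norm))
    return cloud


# A mesh, by contrast, stores vertices plus faces. Each face is a triple of
# indices into the vertex list, so the surface is described explicitly.
tetra_vertices = [(1, 1, 1), (1, -1, -1), (-1, 1, -1), (-1, -1, 1)]
tetra_faces = [(0, 1, 2), (0, 3, 1), (0, 2, 3), (1, 3, 2)]


if __name__ == "__main__":
    cloud = sample_sphere_point_cloud(1000)
    print(len(cloud), "points, no connectivity")
    print(len(tetra_vertices), "vertices and", len(tetra_faces), "faces")
```

Turning the first structure into the second means inferring that missing connectivity, which is why converting a point cloud into a mesh is a separate step.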