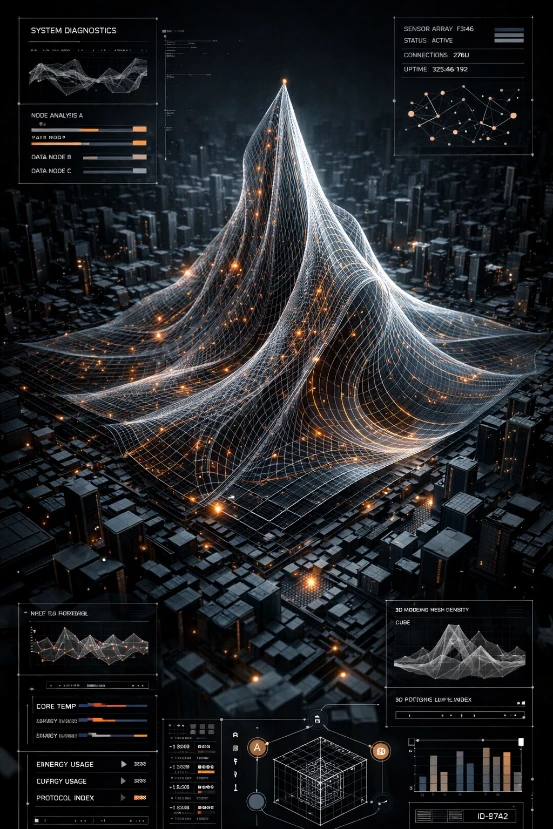

Meta has released its image segmentation model along with the full dataset behind it. Meta named the model the Segment Anything Model (SAM) and also released the SA-1B dataset, which it describes as the largest segmentation dataset to date.

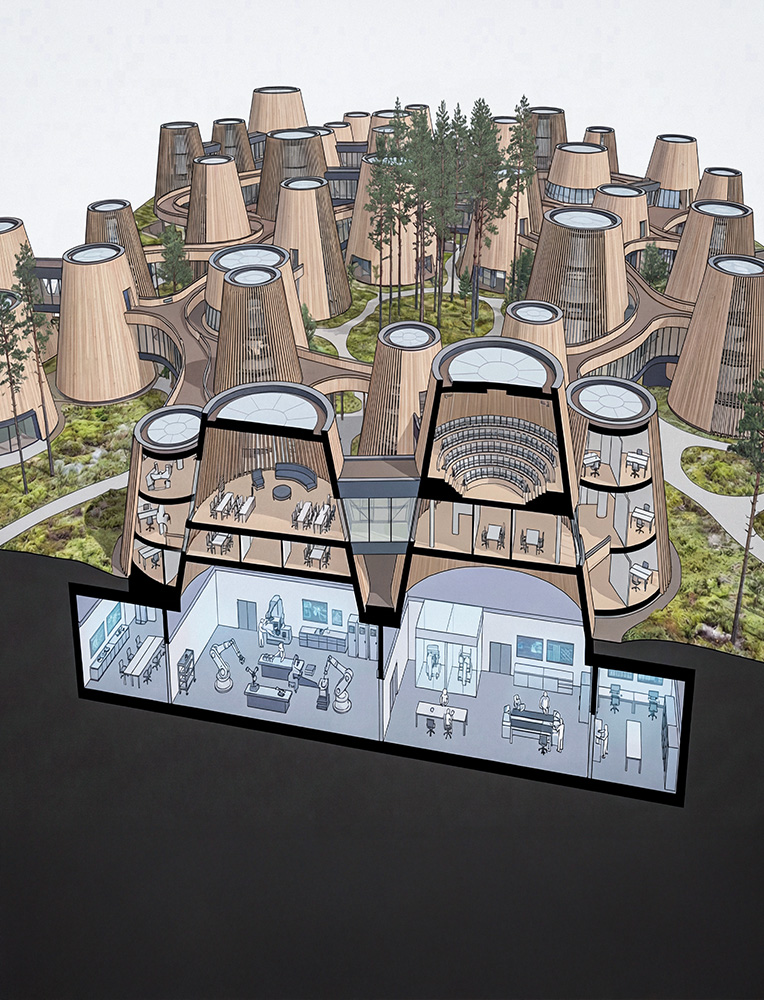

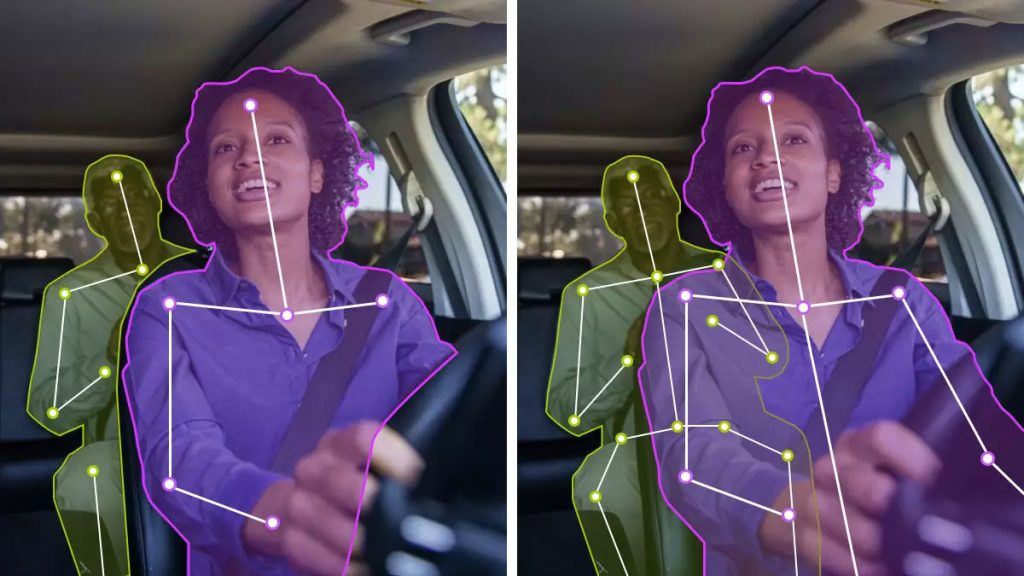

SAM lets users segment an object with a single click, or refine the selection by clicking additional points to include in or exclude from the object. It can also automatically detect and mask every object in an image. Once the image embedding has been precomputed, SAM can generate a segmentation mask for any prompt in real time, which makes interactive use of the model possible.
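The real-time behavior comes from splitting the work in two: a heavy image encoder runs once per image, and a light decoder turns each new prompt into a mask. In Meta's `segment_anything` package this split shows up as `SamPredictor.set_image()` (runs the encoder) followed by any number of `SamPredictor.predict()` calls, where `point_labels` uses 1 for a point to include and 0 for a point to exclude. The sketch below is only a toy illustration of that pattern, assuming a grayscale image given as nested lists: the class `ToyPromptableSegmenter`, its `tol` threshold, and its pixel-similarity "decoder" are hypothetical and contain no real model.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Image = List[List[float]]  # toy grayscale image, pixel values in [0, 1]
Mask = List[List[bool]]


@dataclass
class ToyPromptableSegmenter:
    """Hypothetical stand-in for SAM's split design: an expensive image
    encoder that runs once per image, and a cheap prompt decoder that
    runs once per click. No real model is involved."""

    tol: float = 0.2        # hypothetical similarity threshold for the toy decoder
    encoder_calls: int = 0  # counts how often the "expensive" step ran
    _embedding: Optional[Image] = field(default=None, repr=False)

    def set_image(self, image: Image) -> None:
        # Stand-in for the heavy image encoder; its result is cached.
        self.encoder_calls += 1
        self._embedding = [row[:] for row in image]

    def predict(self, points: List[Tuple[int, int]], labels: List[int]) -> Mask:
        # Points are (row, col) here; labels use 1 = include, 0 = exclude.
        if self._embedding is None:
            raise RuntimeError("call set_image() before predict()")
        emb = self._embedding
        pos = [emb[r][c] for (r, c), lab in zip(points, labels) if lab == 1]
        neg = [emb[r][c] for (r, c), lab in zip(points, labels) if lab == 0]

        def keep(v: float) -> bool:
            # Toy rule: a pixel joins the mask if it is close to some included
            # click and closer to it than to every excluded click.
            if not pos:
                return False
            d_pos = min(abs(v - p) for p in pos)
            d_neg = min((abs(v - n) for n in neg), default=float("inf"))
            return d_pos <= self.tol and d_pos < d_neg

        # Decoding only reads the cached embedding; the encoder is not rerun.
        return [[keep(v) for v in row] for row in emb]


img = [
    [0.9, 0.9, 0.1],
    [0.9, 0.8, 0.1],
    [0.2, 0.1, 0.1],
]
seg = ToyPromptableSegmenter()
seg.set_image(img)                          # expensive step, done once
m1 = seg.predict([(0, 0)], [1])             # one click on the bright region
m2 = seg.predict([(0, 0), (1, 1)], [1, 0])  # refine: exclude the pixel at (1, 1)
```

Both prompts reuse the same cached embedding, so `encoder_calls` stays at 1 no matter how many clicks follow. That reuse is the property that lets a real model respond to each click quickly.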

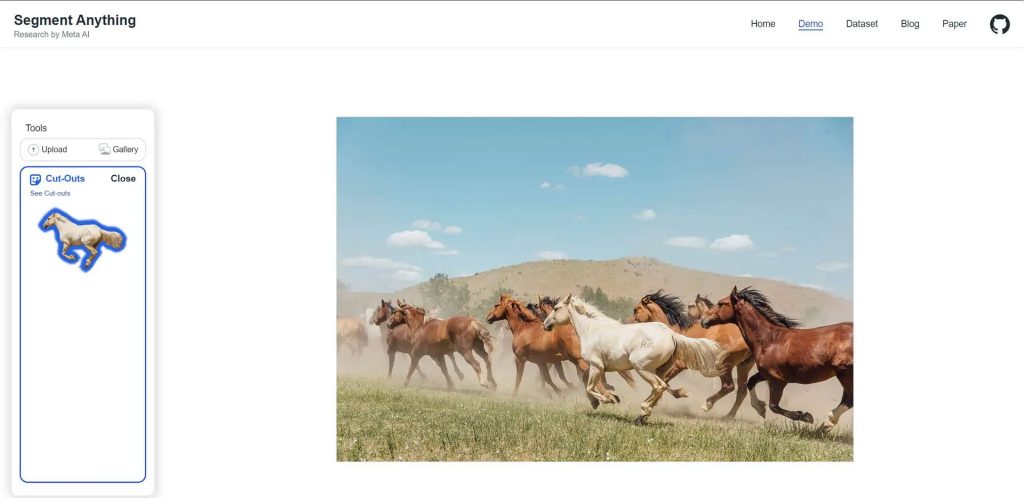

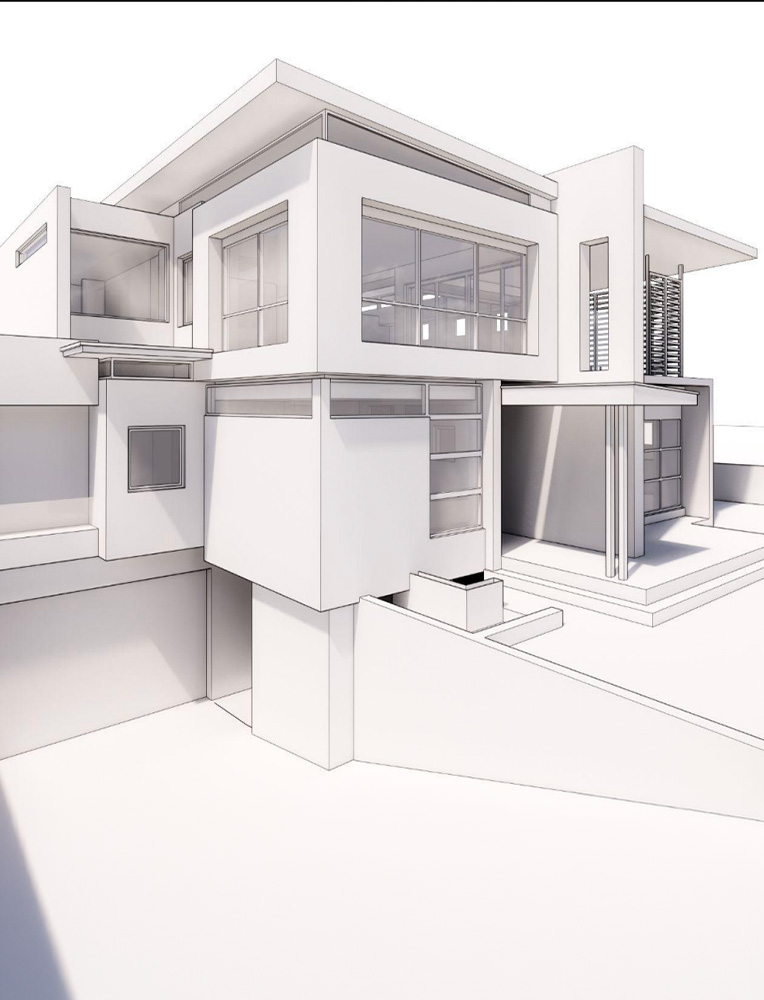

The Segment Anything model can segment objects in images and videos even when those objects did not appear in its training set. Meta's research also explores text prompts: for example, to select the horses in an image, you would simply type “horse” as the prompt. Segment Anything can also be combined with other models. For instance, its masks can help reconstruct an object in 3D from a single image, or it can take input from a mixed-reality headset.

Meta has also published a demo of Segment Anything, where you can try the model on sample images or on your own uploads. Although most of us will not use such a model directly in daily life, it could become an important component of the systems we do use. Social media platforms are an obvious candidate for integrating models like this, and such tools might even help determine whether an image is real or fake.
